Anyone interested in Capitol Hill’s ongoing debate about internet regulation should take five minutes to listen to what Rep. Cathy McMorris-Rodgers said at last week’s House Energy and Commerce Committee hearing.

The committee was discussing Section 230 of the Communications Decency Act of 1996, which helps preserve citizens’ rights to express their beliefs online. Rep. McMorris-Rodgers emphasized the crucial points that get lost in the misguided rhetoric from both sides of the aisle.

There have been some calls—from both sides of the aisle—for a Big Government to mandate or dictate free speech or ensure fairness online. These proposals are not consistent with the #FirstAmendment. pic.twitter.com/wNVnf2DJ87

— CathyMcMorrisRodgers (@cathymcmorris) October 16, 2019

Driving this debate is the question of accountability. Who should be responsible for content posted online? Is it the person who posted it or the platform on which they posted? And how should online content be moderated?

Current law answers the first two questions.

“Section 230 preserves that individuals, not the tools they use, are legally responsible for harmful behavior online,” stated Americans for Prosperity Senior Tech Policy Analyst Billy Easley, echoing Rep. McMorris-Rodgers. “Section 230 is not a shield for those who break the law online and the Department of Justice can still prosecute anyone who violates federal criminal law, whether on a website or not.”

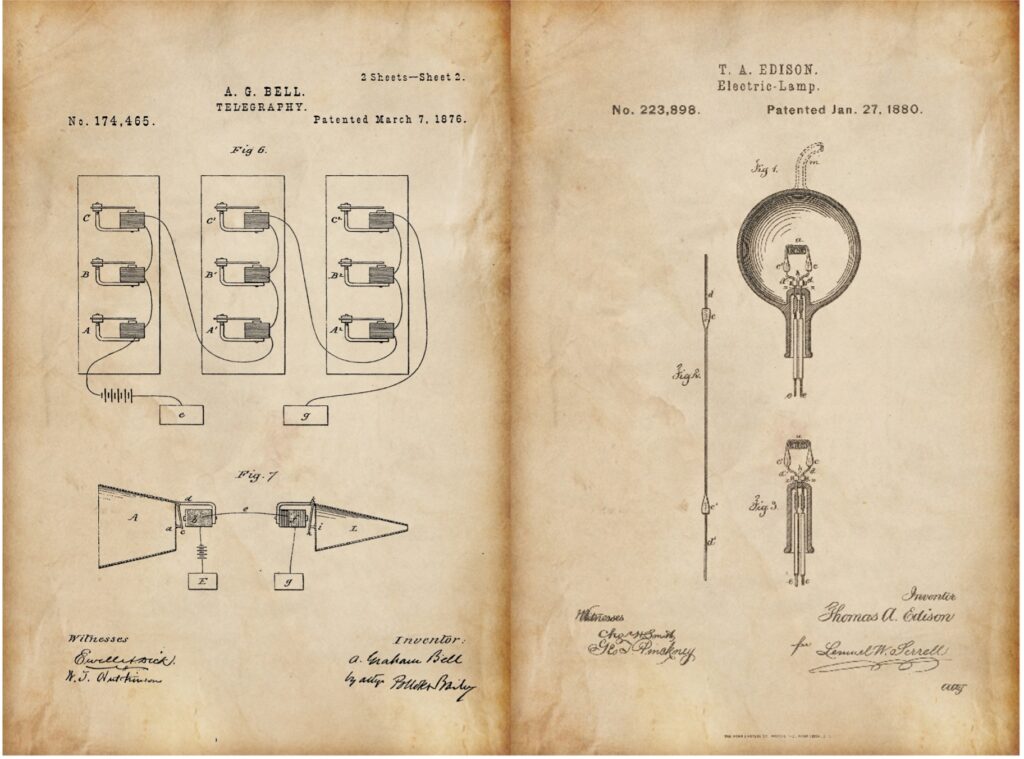

And that’s how it should be. By protecting smaller services and startups from potentially crushing liability, Section 230 encourages online platforms to keep innovating. Defending against excessive, meritless lawsuits is expensive and could shutter the next generation of groundbreaking online platforms before they have a chance to create value.

The correct answer to the third question remains to be seen. As technology advances, engineers will continue to develop methods of removing inappropriate, harmful and offensive content.

But there’s certainly a wrong answer, one that some politicians, both Republicans and Democrats, seem all too eager to propose: a government-mandated solution to policing speech online. As we’ve seen in a variety of policy areas, these types of top-down approaches only fail the country.

“It shouldn’t be the FCC, FTC or any government agency’s job to moderate free speech online,” Rep. McMorris-Rodgers said.

As Easley noted, a policy approach built on heavy-handed regulation by agencies such as the Federal Communications Commission and the Federal Trade Commission “sets up a scenario where political powers dictate what is fair or unfair when it comes to free expression online.”

Eroding Section 230 protections jeopardizes all the good social media has done. People are more connected now than ever: to close friends, to distant relatives, and to people who share our interests but whom we might never meet in person. Websites that host user-generated content help us find jobs, carpools, reviews of products and services, and so much more.

“Misguided and hasty attempts to amend or even repeal Section 230 for bias or other reasons,” Rep. McMorris-Rodgers said, “could have unintended consequences for free speech and the ability for small businesses to provide new and innovative services.”